Link Detox Smart (DTOX2)

Find and Disavow Toxic Links.

Recover your rankings and protect them from Google penalties and manual actions. Find toxic links that harm your website. Clean up your backlink profile. Celebrate a Google recovery. Protect yourself from negative SEO.

Features

- Get automated SEO recommendations.
- Perform a data-driven link audit with Link Detox Genesis® AI.
- Recover and protect yourself from Google penalties.
- Speed up Google penalty recovery with Link Detox Boost®.

Benefits

- Experience and data from tens of thousands of Google penalties.
- Central disavow file management and history.
- Toxic-link machine learning with Link Detox Genesis®.
- Training with Google spam data.
- Fancy filters and bulk operations.


Why Link Detox?

A loss in rankings can harm your online business

Link Detox can help you keep your online business safe and recover your traffic.

The industry standard for backlink audits and Link Risk Management

After Google’s first Penguin update, Christoph C. Cemper created Link Detox, an SEO tool that became the industry standard for link audits and link risk management. Learn more about the benefits of using Link Detox to protect your website.

Features

Unique organic data-driven algorithm

Link Detox Genesis® is an organic, data-driven algorithm that assigns a DTOXRISK score to every link based on an aggregate of multiple patterns and risk signals. It helps you assess all your backlinks, recover from Google manual penalties, protect your website from future penalties, and build new, strong links.

Link Detox Risk

Link Detox Risk is a unique metric that calculates the risk of any individual link as well as of your whole domain (Domain DTOXRISK®). Judging the risk of each link helps you decide which links to disavow, remove, or keep.

The Original

Link Detox was the first link audit product on the market. Since then, we’ve helped thousands of companies recover from their penalties. Link Detox was free for its first eight months, which gave us a head start of many billions of data points that no other product has incorporated, or ever will be able to incorporate, into its algorithm.

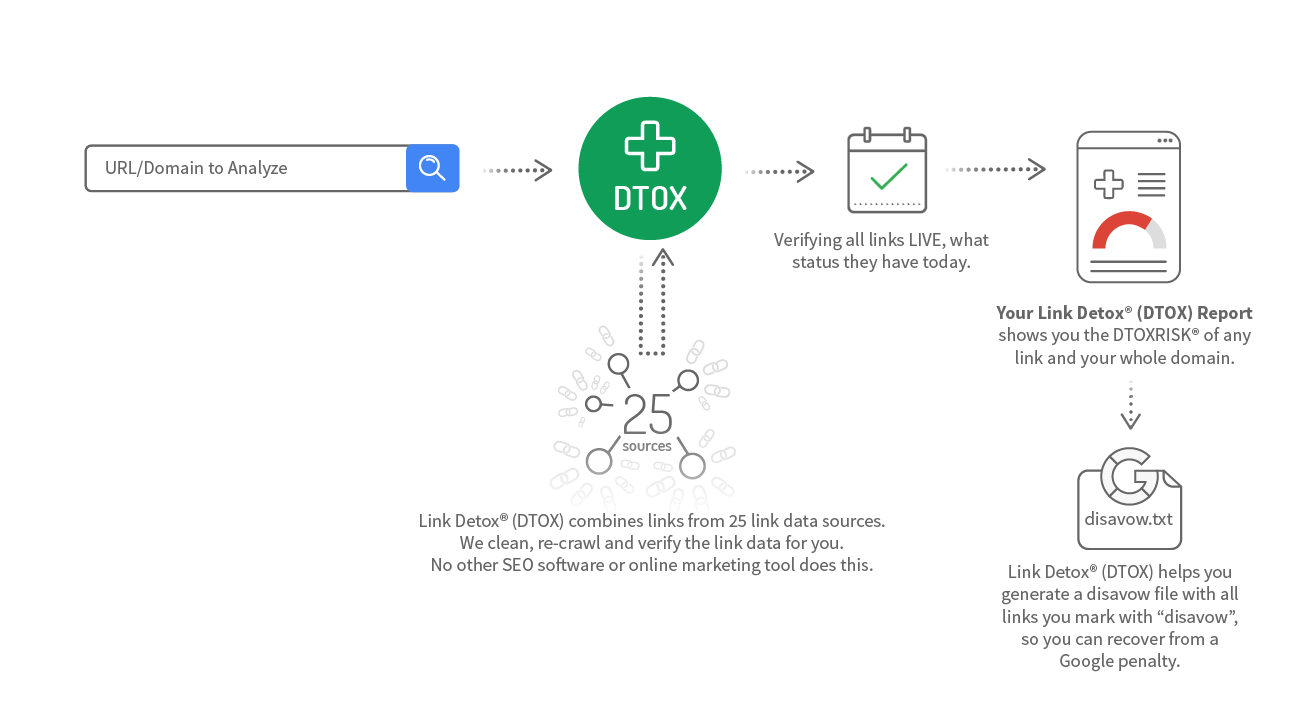

Complete Backlink Profile

Link Detox provides the most complete backlink profile possible. Of course, it’s imperative that you analyze your complete backlink profile. Imagine you only looked at one part of your car during its annual service and missed critical issues that could cost you your life. It’s the same with backlink audits.

Thanks to 25 link data sources and the option to connect Google Search Console and Google Analytics, connect your custom link data APIs, and even upload any number of links, we’ve got you covered. Only your plan limits the number of links you can audit for a domain.

Keyword Intelligence

Link Detox has supported keyword intelligence for a decade, to understand whether a link’s anchor text is commercial in nature or a brand mention. Anchor text classification helps explain the assessed risk based on frequency, wording, and many more factors in Link Detox.
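Link Detox’s actual classifier is not described on this page. As a rough illustration of the idea only, the Python sketch below sorts anchors into brand, money (commercial), and other buckets by simple keyword matching and reports each bucket’s share of the profile. The function names, the three labels, and the substring-matching rule are hypothetical assumptions, not LRT’s method.

```python
from collections import Counter

def classify_anchor(anchor, brand_terms, money_keywords):
    """Label an anchor 'brand', 'money' or 'other' (hypothetical substring rules)."""
    text = anchor.lower()
    # Brand mentions take precedence over commercial keywords, e.g. "Buy Acme now".
    if any(term.lower() in text for term in brand_terms):
        return "brand"
    if any(kw.lower() in text for kw in money_keywords):
        return "money"
    return "other"

def anchor_distribution(anchors, brand_terms, money_keywords):
    """Return the share of each anchor class across all anchors (empty dict if none)."""
    counts = Counter(classify_anchor(a, brand_terms, money_keywords) for a in anchors)
    total = sum(counts.values())
    if total == 0:
        return {}
    return {label: counts[label] / total for label in ("brand", "money", "other")}

anchors = ["Acme Flowers", "cheap flowers online", "buy roses", "click here"]
print(anchor_distribution(anchors, brand_terms={"acme"}, money_keywords={"cheap", "buy"}))
# {'brand': 0.25, 'money': 0.5, 'other': 0.25}
```

A high share of money anchors is only one signal; a real audit also weighs frequency, wording, and the other factors mentioned above.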

Link Data Cleanup & Recrawl

Combining 25+ link data sources would create a mess in other tools. LRT combines, cleans and recrawls all links before it presents results to you.

What’s the point of using link analysis software if you’re looking at old data? This problem of inconsistent data in other products was, in fact, the root idea behind building LinkResearchTools.
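LRT does not document its cleanup pipeline here, so the following is only a minimal Python sketch of the general idea of combining link lists from several sources and removing duplicates. It assumes each source yields `(linking_url, target_url)` pairs. The normalization rules (lowercase scheme and host, drop the `#fragment`, ignore a trailing slash) and the function names are hypothetical, and the recrawl step is not shown.

```python
from urllib.parse import urlsplit, urlunsplit

def normalize_url(url):
    """Canonicalize a URL with simple, assumed rules so duplicates compare equal."""
    parts = urlsplit(url.strip())
    path = parts.path.rstrip("/") or "/"  # treat "/post/" and "/post" as the same page
    # Lowercase scheme and host, keep the query string, drop the #fragment.
    return urlunsplit((parts.scheme.lower(), parts.netloc.lower(), path, parts.query, ""))

def merge_link_sources(*sources):
    """Merge iterables of (linking_url, target_url) pairs into one sorted, de-duplicated list."""
    unique = set()
    for source in sources:
        for linking_url, target_url in source:
            unique.add((normalize_url(linking_url), normalize_url(target_url)))
    return sorted(unique)

# Two hypothetical sources reporting the same link in slightly different spellings.
gsc_links = [("https://Blog.example.com/post/", "https://mysite.example/")]
api_links = [("https://blog.example.com/post#comments", "https://mysite.example")]
print(merge_link_sources(gsc_links, api_links))
# [('https://blog.example.com/post', 'https://mysite.example/')]
```

Stripping trailing slashes is a judgment call: on some servers `/post` and `/post/` are different pages, which is one reason recrawling the merged links matters.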

Custom Link Data

You can upload any link lists you have to Link Detox to enrich our backlink data. While this helps you achieve a complete backlink profile audit, it’s not required.

Disavow File Import & Generation

Link Detox supported the Google disavow file just 7 hours after Matt Cutts officially announced the feature at Pubcon Las Vegas 2012. Since then, we’ve gone to great lengths to make disavow file handling perfect for our users.

Disavow File Management

Every action you apply to your disavow file is managed centrally by Link Detox. We create and maintain your disavow file. No more messing around with text files and manual comparisons. We generate the file in the exact format required by Google.
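For readers who have maintained disavow files by hand, here is a minimal Python sketch of the plain-text format Google accepts: one entry per line, `domain:` for a whole domain, a single URL otherwise, and `#` for comment lines. The function name and the choice to drop URLs whose host is already disavowed at domain level are our own assumptions; this is not how Link Detox builds its files.

```python
from urllib.parse import urlsplit

def build_disavow_file(domains, urls, comment=None):
    """Render disavow-file text: '#' comments, then 'domain:' entries, then single URLs."""
    domain_set = {d.strip().lower() for d in domains if d.strip()}
    lines = []
    if comment:
        lines.extend(f"# {text}" for text in comment.splitlines())
    lines.extend(f"domain:{d}" for d in sorted(domain_set))
    for url in sorted({u.strip() for u in urls if u.strip()}):
        # A URL on an exactly matching disavowed host is already covered by its domain: entry.
        if (urlsplit(url).hostname or "") in domain_set:
            continue
        lines.append(url)
    return "\n".join(lines) + "\n"

text = build_disavow_file(
    domains=["Spammy-Directory.example", "spammy-directory.example"],
    urls=["https://blog.example/cheap-links.html", "https://spammy-directory.example/page1"],
    comment="Disavow file for mysite.example",
)
print(text, end="")
# # Disavow file for mysite.example
# domain:spammy-directory.example
# https://blog.example/cheap-links.html
```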

Disavow File Audit

Let’s face it: clients change, SEOs change. Sometimes you are the one taking over a domain with an existing disavow file, and the first thing you want to do is perform a disavow file audit. In it, you might find many useful links that you could un-disavow, recovering traffic lost to past human error or simply because the web changes daily.

Disavow File History

Link Detox keeps a history of all changes for you in your active account. This helps you keep track of changes you or your team made over time.

Disavow File History works like version control for links and disavow files. Think of it as a revision history of your disavow file. You can even retrieve past versions of your disavow file with this feature.
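To make the version-control analogy concrete, the following hypothetical Python sketch compares two versions of a disavow file and lists which entries were added and which were removed (removed entries are effectively un-disavowed). The function names are illustrative assumptions; it ignores blank lines and `#` comments and is not Link Detox’s implementation.

```python
def parse_disavow(text):
    """Return the set of entries in a disavow file, ignoring blank lines and '#' comments."""
    entries = set()
    for line in text.splitlines():
        line = line.strip()
        if line and not line.startswith("#"):
            entries.add(line)
    return entries

def diff_disavow(old_text, new_text):
    """Compare two disavow-file versions and report added and removed entries."""
    old, new = parse_disavow(old_text), parse_disavow(new_text)
    return {"added": sorted(new - old), "removed": sorted(old - new)}

v1 = "# version 1\ndomain:spam.example\nhttps://a.example/x\n"
v2 = "# version 2\ndomain:spam.example\ndomain:pbn.example\n"
print(diff_disavow(v1, v2))
# {'added': ['domain:pbn.example'], 'removed': ['https://a.example/x']}
```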

Link Simulator

Link Detox works together with the Link Simulator (SIM), which lets you quickly review link opportunities and understand how much they could increase your link risk.

Google Search Console Integration

The more link data we have, the more precise your analysis will be. You can connect Google Search Console with LRT. We even support multiple GSC properties for the same website, a secret tactic for getting more links out of Google Search Console.

Link Detox Tune

You can adjust Link Detox to your needs and opinion. Link Detox Tune is a unique technology that allows you to change the weights of all Link Detox rules for your link audits.

This is especially useful for exotic niches or if you want to accept a particular risk, e.g. when building a PBN.
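The real Link Detox rules and weights are not published here. Purely as an illustration of what "changing the weights of rules" can mean, this Python sketch scores a link as a weighted average of rule signals in [0, 1], where setting a weight to 0 switches a rule off. The rule names, signals, and link attributes are hypothetical assumptions, not DTOXRISK.

```python
from typing import Callable, Dict

# Hypothetical rules: each maps a link's attributes to a risk signal in [0, 1].
RULES: Dict[str, Callable[[dict], float]] = {
    "sitewide_link": lambda link: 1.0 if link.get("sitewide") else 0.0,
    "money_anchor": lambda link: 1.0 if link.get("anchor_type") == "money" else 0.0,
    "low_authority": lambda link: 1.0 - min(link.get("authority", 0) / 100, 1.0),
}

def link_risk(link: dict, weights: Dict[str, float]) -> float:
    """Weighted average of rule signals; rules with weight 0 (or missing) are ignored."""
    total = sum(weights.get(name, 0.0) for name in RULES)
    if total == 0:
        return 0.0
    score = sum(weights.get(name, 0.0) * rule(link) for name, rule in RULES.items())
    return score / total

link = {"sitewide": True, "anchor_type": "brand", "authority": 20}
default_weights = {"sitewide_link": 1.0, "money_anchor": 1.0, "low_authority": 1.0}
# In a niche where sitewide links are normal, a user might switch that rule off.
tuned_weights = {"sitewide_link": 0.0, "money_anchor": 1.0, "low_authority": 1.0}
print(round(link_risk(link, default_weights), 2))  # 0.6
print(round(link_risk(link, tuned_weights), 2))    # 0.4
```

A weighted average keeps scores in the same [0, 1] range no matter how the weights are tuned, which makes audits with different settings easier to compare.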

Link Screener

The Link Screener gives you a handy way to review your links. Using hotkeys on your keyboard, you can quickly navigate through the backlink profile and efficiently decide which links to disavow and which to keep.

Off-label use: Black hat

Some black hat SEOs use Link Detox to find patterns in their link networks and PBNs. Since Link Detox is the leader in finding footprints and unnatural links, this off-label use makes perfect sense. Don’t try this at home.

Audit NoFollow Links?

NoFollow links can also trigger spam penalties in Google. Whether you decide to audit them as well or to ignore them, Link Detox supports you. You can even start by auditing Follow links first, and then finalize your audit by re-running it for all links, including NoFollow. Better safe than sorry. More about the risk of NoFollow links and the new NoFollow 2.0 here.

Find strong and healthy links

Using CDTOX, you can prospect for strong, healthy links among your competitors’ backlinks. Of course, all the keyword classifications and training you applied in Link Detox will be used there, too.

Boost Your Disavow and Links

Link Detox fully integrates with Link Detox Boost that helps you recover faster.

How Link Detox Smart works

Learn more about Link Detox Smart

The Interflora penalty attracted considerable attention in the industry. This case study demonstrates the use of Link Detox Classic to audit the problems.

Home24 was one of the first victims of the aggressive Google Penguin 2.0 penalty. This case study demonstrates the use of Link Detox Classic to find obvious and not-so-obvious spam problems. Most notable is the new impact of spammy redirects.

See how Christoph C. Cemper used the Link Check Tool (LCT) to check the status of 4,649 spam link examples for 854 domains collected between August 16, 2013, and July 2, 2014. The results will surprise you.

The newly introduced NoFollow 2.0 attributes changed the game for SEOs... read why.